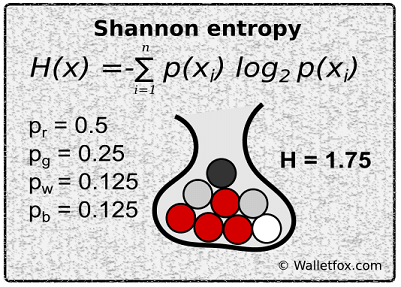

Shannon entropy measures this fundamental constraint. To communicate a series of random events, such as coin flips, you need to use a lot of information, since there’s no structure to the message. Claude Shannon recognized that the elemental ingredient is surprise.

I would like some clarifications on two points of Shannon's definition of entropy for a random variable and his notion of the self-information of a state of the random variable.

We define the amount of self-information of a certain state $v$ of a random variable as $h(v) = -\log_2 P(v)$. As far as I understand, Shannon arrived at this definition because it respects some intuitive properties (for instance, we want the states with the highest probability to give the least amount of information). As far as I understand, the general entropy of a random variable $X$ should be the mean amount of self-information over the states of the random variable.

I have read somewhere that, given a random variable and considering the self-information of its states, each self-information amount gives the minimal number of bits needed to encode that state. I do understand this when we are talking about variables following a uniform probability distribution. For instance, if we have a variable with 4 states that all have the same probability, the self-information of each state $v$ gives the number of bits needed to efficiently transmit that state, under the assumption that, since all the states have the same probability, we should not "prioritize" one over the others. But I do not understand how this holds for a random variable with a non-uniform probability distribution: why should $h(v) = -\log_2 P(v)$ be the number of bits needed for exactly that state $v$?

Also, can the probability $P(v)$ be approximated by an estimate based on the results of experiments in which that random variable is executed?

Thanks and sorry for the extensive questions.

In Shannon's "Prediction and Entropy of Printed English", a new method of estimating the entropy and redundancy of a language is described. This method exploits the knowledge of the language statistics possessed by those who speak the language, and depends on experimental results in prediction of the next letter when the preceding text is known.

Based on Jensen's inequality and the Shannon entropy, an extension of the new measure, the Jensen-Shannon divergence, is derived.

Using the B3LYP/6-31+G** level of theory, the Shannon aromaticities (SA) for a series of mono-substituted benzene derivatives are calculated and analyzed. It is found that the least standard deviation between the aromaticities and the best linear correlation with the Hammett substituent constants are observed for the new index in comparison with the other indices. Significant linear correlations are observed between the evaluated SAs and some other criteria of aromaticity such as the ASE, Λ and NICS indices. According to the obtained relationships, the range 0.003 < SA < 0.005 is chosen as the boundary of aromaticity/antiaromaticity. The values of the new index are also evaluated for some substituted penta- and heptafulvenes, and they successfully predict the order of aromaticity in these compounds. Applying this index to some non-benzenoids and to linear and angular polyacenes also gives satisfactory results, and the index proves to be quite suitable for determining the local aromaticity of different rings in polyaromatic hydrocarbons.
The unit of entropy Shannon chooses is based on the uncertainty of a fair coin flip, and he calls this 'the bit', which is equivalent to a fair bounce.
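As a rough illustration of the bit as a unit, here is a minimal Python sketch of the self-information $h(v) = -\log_2 P(v)$ and of entropy as its mean. The function names, the example distributions, and the small prefix code are assumptions chosen for illustration; they are not taken from Shannon's work.

```python
import math


def self_information(p: float) -> float:
    """Self-information h(v) = -log2 P(v), in bits, of a state with probability p."""
    return -math.log2(p)


def entropy(probs) -> float:
    """Shannon entropy in bits: the probability-weighted mean of the self-information."""
    return sum(p * self_information(p) for p in probs if p > 0)


# A fair coin flip is the reference case: exactly one bit of uncertainty.
fair_coin = [0.5, 0.5]

# Four equally likely states: every state needs the same 2 bits.
uniform4 = [0.25] * 4

# A non-uniform example (hypothetical): likelier states get shorter codewords.
skewed = {"a": 0.5, "b": 0.25, "c": 0.25}
prefix_code = {"a": "0", "b": "10", "c": "11"}  # illustrative prefix-free code

# Average number of bits per state when using the code above.
avg_len = sum(p * len(prefix_code[s]) for s, p in skewed.items())

print(entropy(fair_coin))         # entropy of the fair coin
print(entropy(uniform4))          # entropy of the uniform 4-state variable
print(entropy(skewed.values()))   # entropy of the skewed variable
print(avg_len)                    # average codeword length for the skewed variable
```

In the skewed example each codeword length equals the self-information of its state, so the average code length matches the entropy. This works out exactly only because the probabilities are powers of 1/2; in general $-\log_2 P(v)$ is not a whole number.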

In physics, the word entropy has important physical implications as the amount of 'disorder' of a system. In mathematics, a more abstract definition is used.

Based on the local Shannon entropy concept in information theory, a new measure of aromaticity is introduced. This index, which describes the probability of electronic charge distribution between atoms in a given ring, is called Shannon aromaticity (SA). Using the B3LYP method and different basis sets (6-31G**, 6-31+G** and 6-311++G**), the SA values of some five-membered heterocycles, $\mathrm{C_4H_4X}$, are calculated.
